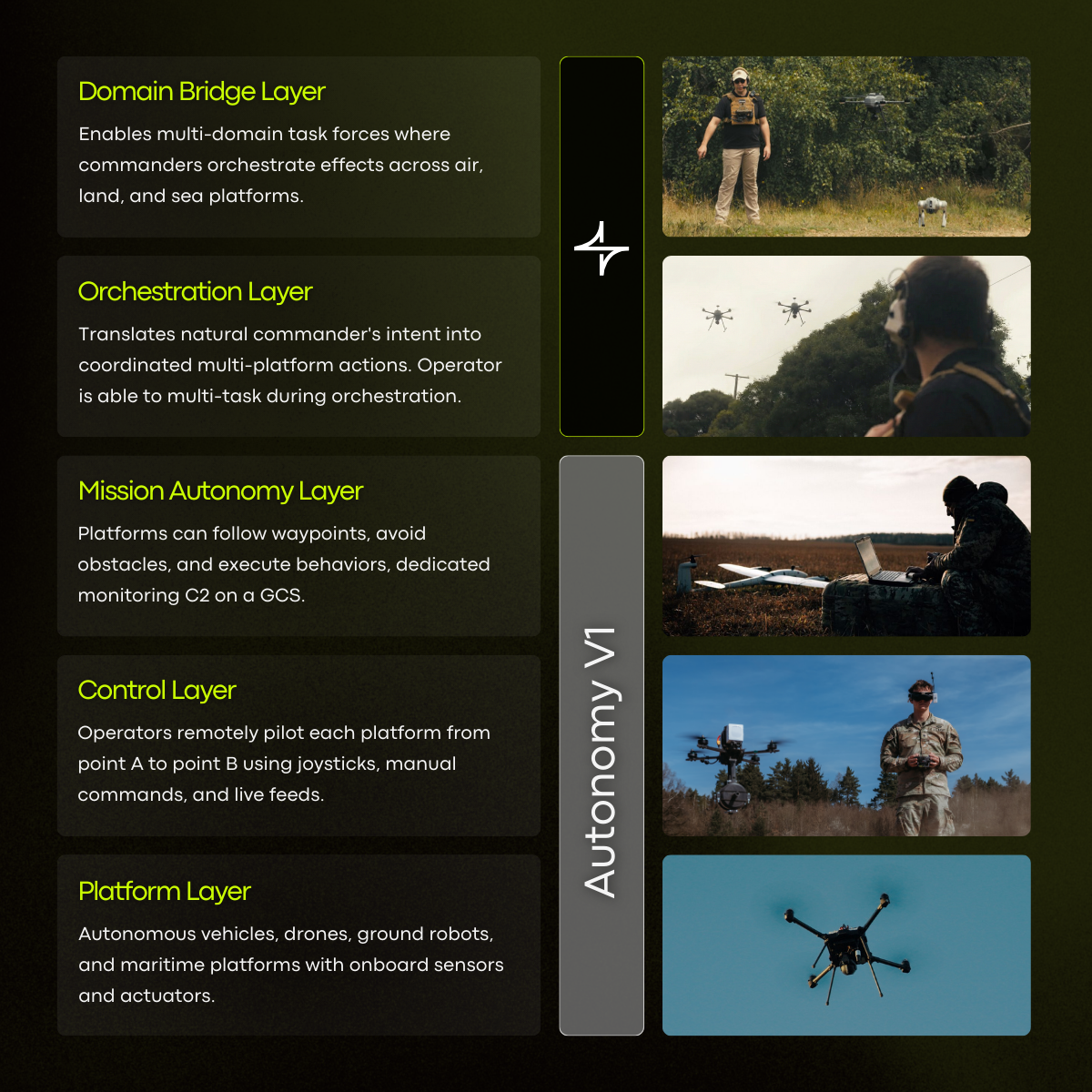

The 5 Layers Of The Modern Autonomy Stack

We Forgot To Start With The Human.

The past decade of autonomous systems development has been genuinely impressive. Drones that fly themselves. Ground robots that navigate complex terrain. Maritime platforms that patrol autonomously for hours. The hardware has never been more capable.

And yet, more often than not, a single operator still manages a single platform: head down in a screen, thumbs on a joystick, cut off from the broader mission unfolding around them and unable to do much else.

The technology advanced. The operator bottleneck didn't.

The reason comes down to a simple but overlooked problem: the industry has been building from the bottom of the stack up, starting with the machines and working its way toward the operator. If you want to build the ultimate human-machine team, you need to do the opposite: start with the human and work your way down to the machine.

People don't think in ones and zeros. We don't think in battery percentages, waypoints, and button presses, especially not under stress. We think in intent. We shout information at the teammates next to us when things are changing fast. There are more situations where an operator can't use a screen than ones where they can. So even if you could build the ultimate UI for orchestrating teams of autonomous systems, should you?

This article explores what Breaker is proposing as a new autonomy stack: how it fits into the existing landscape of systems, why the top two layers have been left largely unsolved, and why closing that gap matters for the West's ability to win.

Layer 1: The Platform Layer

This is the hardware: the physical machines themselves. Uncrewed aerial, ground, and surface vehicles (UAVs, UGVs, USVs), along with other ground robots and maritime platforms, equipped with sensors, cameras, and actuators. The Platform Layer has attracted the most investment and attention, and rightfully so. You can't build anything without the machines.

Dozens of companies across the defense and commercial sectors have made enormous strides here. Platforms are faster, more reliable, more capable, and cheaper than ever before.

Status: Mature.

Layer 2: The Control Layer

Once you have a platform, someone needs to fly it, drive it, or steer it. The Control Layer covers direct teleoperation: operators remotely piloting each platform from point A to point B using joysticks, manual commands, and live video feeds.

This is the layer most people picture when they think of drone warfare: a person with a controller, a screen showing the camera feed, and very direct, very deliberate machine control.

It works. But it scales terribly. One operator, one platform, total attention required.

Status: Mature.

Layer 3: The Mission Autonomy Layer

This is where onboard intelligence begins to appear. Platforms at this layer can follow pre-programmed waypoints, detect and avoid obstacles, and execute conditional behaviors: if this, then that. A dedicated Ground Control Station (GCS) monitors and manages the platform's autonomous execution.
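To make the "if this, then that" model concrete, here is a minimal Python sketch of a scripted Layer 3 mission. Everything in it (the `ScriptedMission` class, the event names, the fallback message) is hypothetical and not drawn from any real GCS or autopilot API. It shows the ceiling in miniature: the platform can only follow its waypoint list and look up responses to events someone anticipated in advance.

```python
from dataclasses import dataclass, field


@dataclass
class ScriptedMission:
    # Hypothetical Layer 3 mission: fixed waypoints plus if-this-then-that rules.
    waypoints: list[tuple[float, float]]
    rules: dict[str, str] = field(default_factory=dict)  # event name -> behavior
    next_wp: int = 0

    def on_event(self, event: str) -> str:
        """Look up a pre-programmed response; anything unanticipated falls through."""
        return self.rules.get(event, "no rule: hold and wait for GCS operator")

    def advance(self):
        """Return the next waypoint, or None once the script is exhausted."""
        if self.next_wp >= len(self.waypoints):
            return None
        wp = self.waypoints[self.next_wp]
        self.next_wp += 1
        return wp


mission = ScriptedMission(
    waypoints=[(0.0, 0.0), (1.0, 2.0), (3.0, 2.0)],
    rules={
        "obstacle_detected": "replan around obstacle",
        "battery_low": "return to launch",
    },
)

print(mission.advance())                    # first scripted waypoint
print(mission.on_event("battery_low"))      # anticipated event: has a rule
print(mission.on_event("vehicle_spotted"))  # unanticipated event: no rule
```

An event nobody scripted, such as `vehicle_spotted`, matches no rule, so the platform can only hold and wait for a human at the GCS.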

Mission Autonomy is genuinely useful. It frees the operator from continuous joystick input and enables basic scripted behaviors.

But it has a hard ceiling. The behaviors have to be pre-programmed. The system can't adapt to unstructured situations. And critically, someone still has to be watching a GCS screen, often miles behind the front line, ready to intervene.

Modern conflict has exposed exactly how fragile this model is. In environments like Ukraine, communications are constantly denied or degraded. When intelligence and decision-making sit off the platform, centralized in a GCS or cloud server, the system is only as good as its connection. The moment comms go out, the platforms go dark. Operators on the front line are left with hardware that can't think for itself, and controllers who can no longer reach it. A single point of command is a single point of failure.

Status: Mature, but with a hard ceiling.

Layer 4: The Orchestration Layer

Here is where the gap begins. The Orchestration Layer is the capability that translates a commander's intent into coordinated, real-time action across multiple platforms simultaneously. Not pre-scripted waypoints: actual intent. "Search Area Bravo, then establish overwatch on Route Zulu." "Follow that truck." "If you see movement at the northern perimeter, report it immediately."

This layer requires understanding natural language, reasoning about mission context, assigning tasks intelligently across a fleet, and adapting when conditions change, all without requiring the operator to manage each platform individually.
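Natural-language understanding is the hard part of this layer, but the step after it, assigning decomposed tasks across a fleet, can be illustrated simply. The following is a minimal Python sketch, assuming the operator's intent has already been parsed into discrete tasks. The `Platform` and `Task` types, the battery threshold, and the greedy nearest-platform rule are hypothetical illustrations, not how Avalon or any particular system allocates work; a fielded system would likely need a richer cost model or an optimal assignment method.

```python
from dataclasses import dataclass
from math import dist


@dataclass
class Platform:
    # Hypothetical fleet member; field names are illustrative only.
    name: str
    domain: str                      # "air", "land", or "sea"
    position: tuple[float, float]
    battery_pct: float


@dataclass
class Task:
    # One unit of work, assumed to be already decomposed from operator intent.
    name: str
    domain: str
    location: tuple[float, float]


def assign_tasks(tasks, platforms, min_battery=20.0):
    """Greedily give each task to the nearest idle platform that can do it.

    Returns a dict mapping task name -> platform name, or None when no
    platform qualifies. A simple heuristic, not an optimal solver.
    """
    idle = list(platforms)  # copy so the caller's fleet list is untouched
    plan = {}
    for task in tasks:
        candidates = [
            p for p in idle
            if p.domain == task.domain and p.battery_pct >= min_battery
        ]
        if not candidates:
            plan[task.name] = None
            continue
        best = min(candidates, key=lambda p: dist(p.position, task.location))
        plan[task.name] = best.name
        idle.remove(best)  # each platform takes at most one task in this sketch
    return plan


fleet = [
    Platform("uav-1", "air", (0.0, 0.0), 80.0),
    Platform("uav-2", "air", (5.0, 5.0), 15.0),
    Platform("ugv-1", "land", (2.0, 1.0), 60.0),
]
tasks = [
    Task("search-area-bravo", "air", (4.0, 4.0)),
    Task("overwatch-route-zulu", "land", (3.0, 0.0)),
    Task("second-air-sweep", "air", (6.0, 6.0)),
]
plan = assign_tasks(tasks, fleet)
print(plan)
```

The second air task goes unassigned because the only remaining aircraft is below the battery threshold. In a real system, that gap is exactly what the operator should hear about rather than discover later.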

Without the Orchestration Layer, multi-platform operations require multiple operators. One drone, one person. Five drones, five people. The math doesn't work in environments where personnel are the scarcest resource.

The defense industry has largely ignored this layer because it's genuinely hard. It requires AI systems capable of real-time reasoning, voice comprehension in degraded acoustic environments, and decision-making that can be trusted to act without catastrophic hallucinations. Until recently, that wasn't buildable. Now it is.

Status: Largely absent. The first critical gap.

Layer 5: The Domain Bridge Layer

If the Orchestration Layer coordinates a single fleet, the Domain Bridge Layer coordinates across multiple fleets operating in completely different domains: air, land, and sea simultaneously.

Modern operations rarely happen in a single domain. A UAV team conducting ISR needs to hand off targeting data to a ground team. Maritime platforms need to synchronize with aerial overwatch. A single commander needs a unified operating picture that spans every asset in the battlespace, regardless of what kind of machine it is or what API it runs on.

The Domain Bridge Layer is what enables true multi-domain task forces, where a commander can issue effects-based intent and have it execute intelligently across a heterogeneous, cross-domain fleet. Without it, each domain remains a silo, each with its own operators, its own screens, and its own cognitive overhead.

Status: Almost entirely absent. The second critical gap.

Why These Two Layers Were Skipped

It's not that the industry didn't recognize the need. It's that the technical prerequisites weren't available, and the problem is significantly harder than it looks.

The compute problem.

Enterprise AI runs on data centers. Robots run on batteries. A solution that requires cloud connectivity fails the moment communications are denied, which is precisely when it's needed most. Building AI that runs entirely onboard a 25-watt UAV compute unit, in the desert sun, with zero external dependencies, is a fundamentally different engineering challenge from building a chatbot.

The hallucination problem.

AI systems hallucinate. In a consumer application, a hallucination is an annoyance. In an autonomous weapons system, it's a mission-ending failure, or worse. Any system operating at the Orchestration or Domain Bridge layer has to solve for hallucination exposure before it can be trusted with real-world missions.
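One way to limit hallucination exposure is to let the AI propose actions but never execute them directly: every proposal passes through deterministic checks that the model cannot modify. The Python sketch below illustrates that pattern under stated assumptions. The `ProposedAction` and `MissionConstraints` types and the specific checks (known platform, permitted verb, mission boundary) are hypothetical examples, not Breaker's architecture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    # What the reasoning component (e.g. a language model) wants to do.
    platform: str
    verb: str
    target: tuple[float, float]


@dataclass(frozen=True)
class MissionConstraints:
    # Hard limits set by the commander; never written by the model.
    known_platforms: frozenset[str]
    allowed_verbs: frozenset[str]
    boundary: tuple[float, float, float, float]  # (min_x, min_y, max_x, max_y)


def validate(action, constraints):
    """Return (ok, reason) using deterministic checks only, no model in the loop."""
    if action.platform not in constraints.known_platforms:
        return False, f"unknown platform {action.platform!r}"
    if action.verb not in constraints.allowed_verbs:
        return False, f"verb {action.verb!r} not permitted in this mission"
    min_x, min_y, max_x, max_y = constraints.boundary
    x, y = action.target
    if not (min_x <= x <= max_x and min_y <= y <= max_y):
        return False, f"target {action.target} is outside the mission boundary"
    return True, "ok"


def act(proposals, constraints):
    """Keep only validated proposals; collect rejections for operator feedback."""
    executed, rejected = [], []
    for p in proposals:
        ok, reason = validate(p, constraints)
        if ok:
            executed.append(p)  # a real system would dispatch to the platform here
        else:
            rejected.append((p, reason))
    return executed, rejected


limits = MissionConstraints(
    known_platforms=frozenset({"uav-1", "ugv-1"}),
    allowed_verbs=frozenset({"search", "observe", "report"}),
    boundary=(0.0, 0.0, 10.0, 10.0),
)
proposals = [
    ProposedAction("uav-1", "search", (4.0, 4.0)),
    ProposedAction("uav-3", "search", (2.0, 2.0)),    # hallucinated platform
    ProposedAction("ugv-1", "observe", (12.0, 3.0)),  # target outside boundary
]
done, refused = act(proposals, limits)
for p, why in refused:
    print(f"Rejected {p.platform}: {why}")
```

Rejected proposals carry a plain-language reason, which gives the system something concrete to report back to the operator instead of failing silently.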

The usability problem.

Operators can't use screens or controllers while driving vehicles, returning fire, or operating in weight-restricted dismounted roles. Any interface that requires hands or eyes is already a broken interface. The Orchestration Layer has to work over voice, on existing radio links, in noisy environments, with accents and ambient combat noise, or it doesn't work at all.

And that voice interface has to go both ways. Issuing a command is only half the problem. Operators need the system to talk back: confirming what it heard, reporting what it found, flagging when something changes. A system that listens but doesn't respond puts the operator back in the same position they started in, uncertain whether the mission is executing, whether the platform understood, and whether anything is actually happening out there.

How Avalon Closes the Gap

The Defense Innovation Unit's Autonomous Vehicle Orchestrator challenge defined a clear set of requirements for what a true orchestration layer needs to do: interpret operator intent, coordinate multi-platform action, fuse data across a fleet, enforce mission constraints, and operate under degraded communications. These weren't easy bars to clear, but they set the standard the industry will now work toward.

Avalon meets all of them today. Across multiple real-world engagements, it has demonstrated the ability to translate natural language intent into coordinated multi-platform action across air, land, and sea, running entirely onboard with zero cloud dependency.

USASOC evaluated the system and summed it up: "You simply talk to it as if it were someone on your team. None of the other AI or verbal controlled systems can do that."

But in building and testing Avalon in the field, Breaker has identified several critical requirements the challenge didn't fully capture.

The two-way voice problem. The challenge focused on intent going in. It didn't fully account for what needs to come back out, and how. Operators need continuous, spoken feedback: confirmation of commands, proactive situation reports, and plain-language explanations of what the system is doing and why. Silence is not an acceptable system state.

The trust problem. Deploying AI in mission-critical environments requires more than capable models. It requires an architecture that prevents AI reasoning errors from becoming real-world failures. This demands a fundamental separation between how the system thinks and how it acts.

The scaling problem. A solution that works on one platform type, or one compute budget, isn't a solution. It needs to run on the lowest-powered UAV in the fleet just as well as it runs on a ground vehicle with ten times the compute available.

These are the problems Breaker has spent two years solving.

The Bigger Picture

The autonomous vehicle operator bottleneck is, right now, one of the most expensive capability gaps for the DoW. The platforms exist. The autonomy exists. What's missing is the intelligence layer that lets a small team command a large fleet: the layer that makes a single operator as capable as an entire team of specialists used to be.

The bar has to be set accordingly. What works in a demo but fails in a degraded, contested environment like Ukraine is not a battle-ready solution. The operators who need autonomy most are the ones driving, flying, and fighting at the same time. Any system that can't serve them, in those conditions, isn't ready.

Layers 1 through 3 gave defense better tools. Layers 4 and 5 give defense better teammates.

That's what Breaker is building.